Layered or faceted navigation is one of the most common causes of duplicate content, and it is well known for causing frustrating issues for SEOs through over-indexation and increased numbers of low-quality pages. I’m writing this article partly to help Magento developers and site owners, and partly because I get asked about this at least once a week by email, so I decided it’d be easier to give people a guide to what they should and shouldn’t be doing.

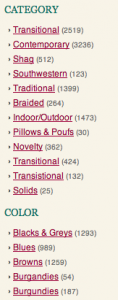

Filter pages are a huge issue for Magento retailers, because layered navigation is so accessible within the platform and it’s great from a user experience point of view. Magento also makes it easy to set up, as filters are built from product attributes out of the box.

There are lots of ways of dealing with faceted navigation (as I’m going to call it in this post) in Magento, some of which are right and some of which are wrong. I’m going to go through each option and explain why it’s a good or bad idea from an SEO perspective.

1. Use the canonical tag (GOOD OPTION)

The canonical tag is the obvious option if you’re launching a new website or just adding filtering to an existing ecommerce website. However, it’s not as good if you’re trying to get pages out of the index. I’ve found that Google often takes months to take note of a newly added canonical tag, and you won’t be able to submit removal requests because you won’t meet Google’s requirements (that the page is either serving a 404 error, has a noindex tag on the page or is disallowed in robots.txt).

The canonical tag will also pass value to the page that’s being canonicalised to, which is especially beneficial if your filter pages have links to them, as the value will be passed to the primary versions of the pages.

The canonical tag should ALWAYS be present though, even if you’re using another method as well, as it helps to prevent issues with query strings and parameters from causing over-indexation problems and it helps to maintain link value. You can find out more about why the canonical tag is fundamental for Magento in this guide to Magento SEO I wrote a few months ago.
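As a rough illustration of the idea (not Magento's own implementation), the sketch below builds a canonical tag by stripping the query string from a filtered URL. It assumes filters are applied as query-string parameters; the `price` and `color` parameter names and the helper names are hypothetical.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Return the URL with its query string and fragment removed.

    Assumption: every query parameter is a filter or tracking parameter,
    so the bare path is the primary version of the page.
    """
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


def canonical_tag(url: str) -> str:
    """Build the <link rel="canonical"> element for a page URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}" />'


if __name__ == "__main__":
    # Hypothetical filtered category URL
    print(canonical_tag("http://magento-website.com/radios/?price=100-250&color=red"))
    # <link rel="canonical" href="http://magento-website.com/radios/" />
```

Every filtered variant of the category then points back to the same primary URL, which is what lets the link value consolidate there.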

VERDICT: Best option, but more so for a new website

—

2. Mask the filtered pages with static URLs and serve them to search engines (BAD OPTION)

For some reason, it’s really common for retailers to opt to simply rewrite the URLs of dynamic filter pages and then assume everything is fine because they look like static pages – this is not the case! Just because you’re making them look like static pages doesn’t change the fact that there are likely to be thousands of them (with the multi-facet combinations) and they’re low quality pages, because they don’t feature any unique content.
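To get a feel for how quickly multi-facet combinations add up, here is a small back-of-the-envelope sketch. The facet sizes are made-up numbers, and it assumes a shopper can pick at most one value per facet; allowing multi-select within a facet would make the total far larger.

```python
from math import prod


def filter_page_count(options_per_facet: list[int]) -> int:
    """Count the filtered URLs a single category can generate.

    Each facet is either unset or set to exactly one of its options,
    so it contributes (options + 1) states. Subtract 1 to exclude the
    unfiltered category page itself.
    """
    return prod(n + 1 for n in options_per_facet) - 1


if __name__ == "__main__":
    # Hypothetical category: 8 price bands, 10 brands, 6 colours, 5 sizes
    print(filter_page_count([8, 10, 6, 5]))  # 4157
```

That is over four thousand near-identical pages from one category, whether or not the URLs look static.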

There are also far too many SEOs recommending this option to Magento retailers. It doesn’t help the situation at all; it simply makes it harder to write rules for applying canonical tags, meta robots tags and so on.

Here’s an example: applying a £100-£250 price filter to http://magento-website.com/radios/ would change the URL to http://magento-website.com/radios/price/100-250.html

The example above shows how the URL changes once a filter has been applied. The new page is still dynamic, though, and cannot accommodate custom content or meta data, making it worthless (and even detrimental) from an SEO perspective.

VERDICT: Very bad idea

—

3. Meta robots tags (GOOD OPTION)

Defining rules for adding meta robots tags or X-Robots-Tag headers is my favourite method for a website that already has existing issues; otherwise it’s second to using the canonical tag. Like the robots.txt option, the meta robots / X-Robots-Tag option also meets Google’s requirements for submitting a removal request via Webmaster Tools, which makes it easier to eliminate the issue (as you can manually remove the URLs from Google’s index).

Assigning noindex, follow tags will tell Googlebot not to index the page, but to continue following the links on the page, so you won’t run the risk of fragmenting crawlability, which can happen when using the robots.txt route.

The main benefit of using a noindex, follow meta robots tag is that the value can still flow through to other pages, as you’re telling Google to continue to follow the links but not to index the page itself.
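The rule itself can be very simple. The sketch below decides which robots directive a URL should get, then renders it either as a meta tag or as an `X-Robots-Tag` response header. It is only an illustration: it assumes filters arrive as query-string parameters, and the parameter names in `FILTER_PARAMS` are hypothetical, so you would swap in your own filterable attributes.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical filter parameters; replace with your store's filterable attributes.
FILTER_PARAMS = {"price", "color", "manufacturer"}


def robots_directive(url: str) -> str:
    """Return 'noindex, follow' for filtered URLs, 'index, follow' otherwise."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    if not FILTER_PARAMS.isdisjoint(query):
        return "noindex, follow"
    return "index, follow"


def meta_robots_tag(url: str) -> str:
    """Render the directive as a tag for the page <head>."""
    return f'<meta name="robots" content="{robots_directive(url)}">'


def x_robots_header(url: str) -> tuple[str, str]:
    """Render the same directive as an HTTP response header pair."""
    return ("X-Robots-Tag", robots_directive(url))


if __name__ == "__main__":
    print(meta_robots_tag("http://magento-website.com/radios/?price=100-250"))
    print(meta_robots_tag("http://magento-website.com/radios/"))
```

The tag and the header carry the same instruction, so which one you use mostly depends on whether it is easier to change your templates or your server responses.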

—

4. Use the robots.txt (GOOD OPTION)

The robots.txt route is an option, as you can use the wildcard to disallow different parameters or URL extensions, but you need to ensure that there’s a consistent section of the URL that will enable you to do a catch-all. So, for example, if you want to block your price parameters, you could include the following:

Disallow: *price*

The main issue with using the robots.txt file is that the links on the blocked pages won’t pass value: if the pages have earned any links, that value won’t be passed on to other pages, because Google won’t access the page. In contrast, if you were to go with the meta robots noindex, follow option, Google wouldn’t index the page, but it would follow the links.

The robots.txt file does allow you to meet Google’s requirements to do removal requests.
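Before deploying a wildcard rule, it’s worth checking what it will actually catch. The sketch below is a simplified matcher written for illustration: it follows the commonly documented wildcard behaviour (`*` matches any run of characters, a trailing `$` anchors the end of the URL, and matching starts at the beginning of the path), but it ignores Allow rules and rule precedence. The function names and test paths are hypothetical.

```python
import re


def robots_pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal pieces and let each '*' match any run of characters.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_disallowed(path: str, pattern: str) -> bool:
    """True if the path (plus query string) is matched by the Disallow pattern."""
    # re.match anchors at the start, mirroring prefix matching in robots.txt.
    return robots_pattern_to_regex(pattern).match(path) is not None


if __name__ == "__main__":
    for path in ("/radios/price/100-250.html",
                 "/radios/?price=100-250",
                 "/radios/",
                 "/low-price-radios/"):
        print(path, is_disallowed(path, "*price*"))
```

Note that `/low-price-radios/` is blocked as well, because the rule catches any URL containing "price". This is why a consistent, unambiguous section of the URL matters for the catch-all.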

—

5. Turn the pages into editable, static pages (GOOD OPTION)

Turning dynamic filter pages into static pages (with unique content and meta content) is by far the best option in my opinion. However, it requires a lot of dedicated content writing resource, and it can take a long time to add content to lots of existing pages.

The main benefit of doing this is that you’re extending your site architecture and creating very specific pages for long-tail queries that most retail websites won’t have a targeted page for. In a lot of cases, depending on the platform, you will need to adapt the navigation to still allow filters on these pages (allowing you to make use of multi-faceted navigation), but those filtered pages can be noindexed and the static pages can be linked to within the navigation.

The other option, depending on the technology you have and how good your developers are, is to inject your content onto the pages (again, an editable content area would be better) and set title tags and meta descriptions somewhere in the back-end.

—

6. Parameter handling in Google Webmaster Tools (OK OPTION)

Google introduced the parameter handling settings in Google Webmaster Tools to allow webmasters to tell them how their crawler should access different dynamic URL parameters. This tool has been getting more effective since it was first released and a lot of SEOs are using it as the primary option for dealing with layered navigation these days.

I’ve found that this method isn’t as effective as using meta robots (or the canonical tag if it’s a new website), but I always set the parameter handling alongside the canonical tag and often alongside meta robots too.

This is something that I’ve tested a lot, and I personally wouldn’t recommend it above some of the other options.

—

7. Nofollowing links to filter pages (BAD OPTION)

This method works and I’ve seen a lot of retailers opt to go with this option because it saves crawl budget – however it does come with issues that some of the other options don’t have, such as the following:

If you have external links to the pages or you accidentally link to the pages, search engines are going to index some of the pages, as you’ve not actually given instructions for these pages. It can also look suspicious that you’ve blocked access to a section of your website.

—

Conclusion

I would recommend using the canonical tag if you’re just launching a website with layered navigation, whilst also setting instructions for Google via the parameter handling section of Webmaster Tools. However, if you’ve got an over-indexation issue you’re looking to resolve, I would recommend assigning noindex, follow directives via meta robots tags or X-Robots-Tag headers, as these will make search engines act faster and also allow you to start submitting manual removal requests (as the pages will meet Google’s removal guidelines).

If you have any questions about layered / faceted navigation, please feel free to email me – [email protected].